Automated detection: Dutch Customs shares its experience

12 October 2022

By the Customs Administration of the Netherlands

In our “Pushing Boundaries” vision, we described our “dot” on the horizon, a helpful focal point when dealing with challenges we are, and will be, confronted with. The most prominent one is how to cope with a rising number of declarations and, consequently, a growing need for inspections. At any given time, we need to find the right balance between Customs supervision and trade facilitation; our aim is to implement robust controls, but with as little administrative hassle and regulatory pressure on business as possible, and with minimal delays to the logistic flow.

First and foremost, a solution is sought for sub-dividing trade and trade flows into those of trusted actors and those of unknown actors. In our vision, we are able to verify – for all forms of transport entering or leaving the European Union (EU) Customs territory – whether the required reports and declarations have been submitted, thereby enabling Customs to obtain a solid overview of each incoming or outgoing container and pallet. Obviously, we only have so many officers, and simply scaling up activities endlessly is not a solution. Obtaining this overview therefore depends on processing information from declarations and other sources, and relies on the use of state-of-the-art information technology (IT).

Second, a solution is sought to enable the Administration to carry out more X-ray inspections overall by enhancing scanned image processing capacity. With this in mind, we participated in the Automated Comparison of X-ray Images for cargo Scanning (ACXIS) project. This was a research project funded by the EU under the 7th Framework Programme, which ran from 2013 to 2017. Besides us, ACXIS brought together the Swiss Federal Laboratories for Materials Science and Technology (EMPA), the French Alternative Energies and Atomic Energy Commission (CEA), the Fraunhofer-Gesellschaft research organization, Smiths Detection, APSS Software & Services and Swiss Customs.

ACXIS

One objective was to develop an automated support system for image analysts. Participants worked in their respective areas of competence to deliver the various components required to create such a system. Some worked on the data gathering aspect, others on the annotation of the images, others on building automated target recognition (ATR) algorithms and training them with data to create models, and others on infrastructure development and deployment.

ACXIS resulted in the development of the first machine learning model that could successfully detect threats and anomalies in large maritime containers. The model was then deployed in a test environment. Unfortunately, once the project was over, the project partners did not manage to maintain ties, and this resulted in a situation where every partner was left with the components it had been working on.

Return to the starting point

Since the ACXIS project came to an end, we have been working to recreate a development process comparable to the one established under the project. This has included collecting and storing X-ray images and related data, setting up a data science unit capable of building machine learning models, altering our information technology infrastructure, cooperating with suppliers to deploy the models in an experimental environment to allow for testing by image analysts and, last but not least, keeping the staff using X-ray technology abreast of all developments. We soon realized that the Administration did not produce enough images of cargo containing a threat to develop models. Thankfully, we were able to cooperate with our Australian, Belgian and Brazilian colleagues on the exchange of X-ray images and related data, and this cooperation is ongoing.

Images were also collected by the officers operating X-ray scanners. In the future, these officers will also have to annotate the images (in other words, describe what is in the image); however, during the experimental phase this job was carried out by the Customs laboratory staff. The Data Science Unit then built detection and classification models using pre-trained models (a pre-trained model is a model that was trained on a large benchmark dataset to solve a problem similar to the one we want to solve). Guided by the Business Operations Directorate, the scanner suppliers deployed the models on the machines used by the Administration. In parallel, the Information Management Directorate worked on developing the information technology infrastructure which will make it possible to move from the experimental to the operational stage.

The experimental phase is intended to enable every directorate in our Administration and each of the suppliers to identify requirements. Aware that individuals possess specific knowledge and that nobody knows everything, we have set up cooperation mechanisms between Customs units which are new to each other, with suppliers and with other Customs administrations to allow, for example, for the exchange of X-ray images or of expertise in developing models.

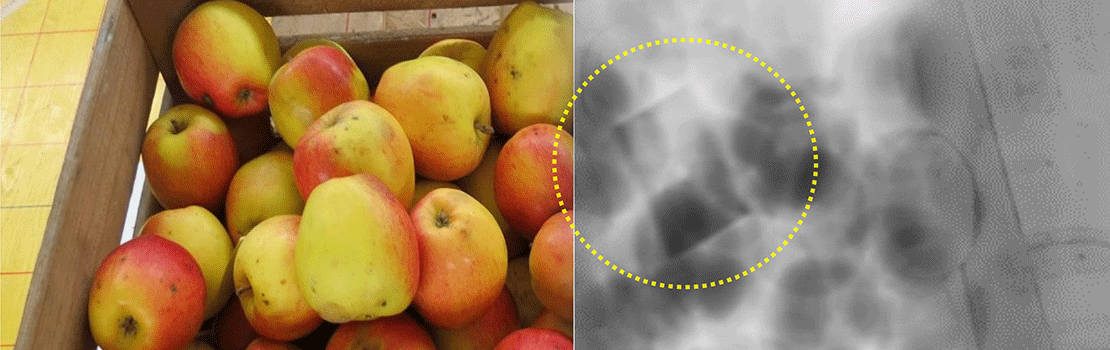

At the WCO Technology Conference and Exhibition, due to take place in October 2022, we intend to demonstrate, together with our suppliers, how the models we have developed enable a machine to detect the presence of pills in envelopes and packages. Two possible outputs are produced: a rectangle around the items, which appears on the analyst’s screen on top of the image (detection model), or a probability score for the presence of pills in the image, which may be reported to the risk engine (classification model).
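The difference between the two outputs can be illustrated with a minimal Python sketch. It is not the code used by the Administration or its suppliers: the `Box` type, the 0.5 display threshold, and the message layout sent to the risk engine are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass(frozen=True)
class Box:
    """Hypothetical detection result: a rectangle in pixel coordinates
    together with the model's confidence that it contains pills."""
    x_min: int
    y_min: int
    x_max: int
    y_max: int
    score: float  # confidence between 0 and 1


def boxes_for_overlay(boxes: List[Box], threshold: float = 0.5) -> List[Box]:
    """Detection model output: keep the rectangles confident enough to be
    drawn on top of the image on the analyst's screen.
    The threshold value is an assumption, not an operational setting."""
    return [box for box in boxes if box.score >= threshold]


def risk_message(image_id: str, probability: float) -> Dict[str, Union[str, float]]:
    """Classification model output: a single probability for the whole
    image, packaged in an assumed format for a risk engine."""
    return {
        "image_id": image_id,
        "label": "pills",
        "probability": round(probability, 3),
    }
```

In this sketch a detection model yields zero or more rectangles per image, whereas a classification model yields exactly one score per image, which is why the first suits a visual overlay and the second suits automated risk assessment.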

Progressing through international cooperation

A lot more could be achieved with the involvement of other Customs administrations. Let’s imagine two more administrations join the project. We will call those administrations B and C, and our administration A. All three administrations, A, B and C, could work on the collection and annotation of X-ray images. A could use the images to develop models which would be deployed in A, B and C with the support of their respective suppliers. The same goes for B and C. Each administration could choose to work on specific items which they consider a priority or which are challenging in terms of detection.

For such cooperation to take place, some requirements have to be met. First, scanned images and associated metadata must be provided in the WCO unified X-ray file format for non-intrusive inspection (NII) devices, codenamed the Unified File Format (UFF).[1] Moreover, the X-ray images should be annotated in a unified way.

Secondly, there is a need to harmonize the way ATR algorithms are deployed with suppliers, for example when it comes to defining model containerization and preparing software environments to allow for plug and play of containerized models.[2] As was the case for the UFF, such work could be steered by the WCO Technical Experts Group on NII (TEG-NII), which is open to all WCO Members and NII industry players.

Thirdly, we must agree on unified methods for setting up a dossier on the source data, the training and the algorithms. Such information will soon be mandatory under, for example, EU legislation. It will also facilitate the use of machine learning models on a wider scale.
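As an illustration only, such a dossier could be kept as a small structured record that travels with each model. The sketch below is an assumption about what such a record might contain; the field names are hypothetical and do not reflect any agreed WCO or EU template.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ModelDossier:
    """Hypothetical record describing the source data, the training
    and the algorithm behind one model."""
    model_name: str
    task: str                 # "detection" or "classification"
    data_sources: List[str]   # where the X-ray images came from
    image_count: int          # number of images used for training
    annotation_scheme: str    # how the images were annotated
    base_model: str           # pre-trained model used as a starting point
    known_limitations: List[str] = field(default_factory=list)


def missing_fields(dossier: ModelDossier) -> List[str]:
    """Return the names of key fields that were left empty."""
    required = ("data_sources", "annotation_scheme", "base_model")
    return [name for name in required if not getattr(dossier, name)]


def dossier_to_json(dossier: ModelDossier) -> str:
    """Serialize the dossier so it can be exchanged between administrations."""
    return json.dumps(asdict(dossier), indent=2, sort_keys=True)
```

A shared record of this kind would let a receiving administration check, before deploying a model, which data it was trained on and whether any key information is missing.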

Get involved

We encourage Customs administrations to make available their plans in terms of innovative use of technology, and to use existing platforms to discuss potential collaboration. Even if we do not share the exact same strategy, we believe that cooperation will prove essential to reach our respective goals. There is only so much a single Customs administration can accomplish on its own, given that resources are scarce. We also need to come together to develop standards and harmonized working methods when it comes to the development and deployment of machine learning models. Such work is crucial to facilitate the sharing and the wide implementation of such tools. We therefore also call on other Customs administrations to support our proposal to carry out this work through the TEG-NII.

More information

Douane.DLK.Internationaal@douane.nl

[1] https://mag.wcoomd.org/magazine/wco-news-89/customs-and-industry-collaborate-to-develop-a-unified-file-format-for-non-intrusive-inspection-devices

[2] “Containerization is the packaging of software code with just the operating system (OS) libraries and dependencies required to run the code to create a single lightweight executable – called a container – that runs consistently on any infrastructure”, see https://www.ibm.com/cloud/learn/containerization.